digital signal processing (DSP)

What is digital signal processing (DSP)?

Digital signal processing (DSP) refers to various techniques for improving the accuracy and reliability of digital communications. This can involve multiple mathematical operations, such as compression, decompression, filtering, equalization, modulation and demodulation, applied to a signal in digital form to produce a cleaner, more usable result.

What is digital signal processing used for?

The theory behind DSP is mathematically complex, but its uses are broad. DSP can clean up or standardize digital signals, and it can also perform other tasks, such as filtering, compression and modulation. DSP algorithms can also help distinguish a desired signal from noise, although they cannot always do so perfectly.
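One of the simplest ways DSP separates a signal from noise is a moving-average filter, which replaces each sample with the average of a short run of neighboring samples. The sketch below assumes the noise changes faster than the signal does; the function name `moving_average` and the sample values are illustrative, not taken from any particular product. Because the filter also smooths the wanted signal, it shows why DSP is not always perfect.

```python
def moving_average(samples, window):
    """Average each run of `window` consecutive samples.

    Output sample i is the mean of samples[i : i + window], so the output is
    window - 1 samples shorter than the input.
    """
    if window < 1 or window > len(samples):
        raise ValueError("window must be between 1 and len(samples)")
    return [sum(samples[i:i + window]) / window
            for i in range(len(samples) - window + 1)]


if __name__ == "__main__":
    # A constant level of 1.0 with alternating +/-0.25 "noise" (made-up data).
    noisy = [1.25, 0.75, 1.25, 0.75, 1.25, 0.75]
    print(moving_average(noisy, 2))  # [1.0, 1.0, 1.0, 1.0, 1.0]
```

A longer window suppresses more noise but also blurs faster changes in the signal, so the window length is a trade-off.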

All communications circuits contain some noise. This is true whether the signals are analog or digital, regardless of the type of information conveyed. Noise is the eternal bane of communications engineers, who are constantly striving to find new ways to improve the signal-to-noise (S/N) ratio in communications systems. Traditional methods of optimizing the S/N ratio include increasing the transmitted signal power and increasing the receiver sensitivity. In wireless systems, specialized antenna systems can also help.
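The S/N ratio is usually expressed in decibels as SNR (dB) = 10 × log10(P_signal / P_noise), where each P is the average power. The minimal sketch below computes it, assuming the signal and noise are available as separate sample lists, which is possible in a simulation but not in a real receiver. The helper names and sample values are hypothetical. It also shows why raising transmitted power helps: doubling the signal power adds about 3 dB.

```python
import math


def mean_power(samples):
    """Average power of a sample sequence: the mean of the squared values."""
    return sum(s * s for s in samples) / len(samples)


def snr_db(signal, noise):
    """Signal-to-noise ratio in decibels: 10 * log10(P_signal / P_noise)."""
    return 10.0 * math.log10(mean_power(signal) / mean_power(noise))


if __name__ == "__main__":
    signal = [1.0, -1.0, 1.0, -1.0]  # made-up signal samples
    noise = [0.1, -0.1, 0.1, -0.1]   # made-up noise samples
    print(round(snr_db(signal, noise), 2))  # 20.0
    # Doubling the signal power (scaling amplitude by sqrt(2)) adds about 3 dB.
    louder = [s * math.sqrt(2) for s in signal]
    print(round(snr_db(louder, noise) - snr_db(signal, noise), 2))  # 3.01
```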

Digital signal processing can dramatically improve the sensitivity of a receiving unit. The effect is most noticeable when noise competes with a desired signal. A good DSP circuit can sometimes seem like an electronic miracle worker, but there are limits to what it can do: if the noise completely overwhelms the signal, a DSP circuit cannot recover any useful information.

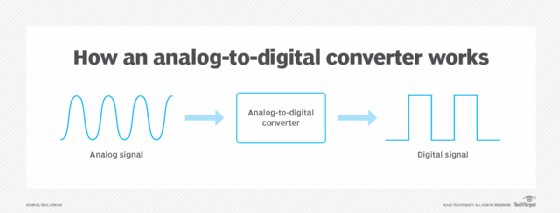

If an incoming signal is analog, it is first converted to digital form by an analog-to-digital converter. The resulting digital signal has two or more levels. Ideally, these levels are always predictable, with exact voltages or currents. However, because the incoming signal contains noise, the levels are not always at the standard values. The DSP circuit adjusts the levels so that they are at the correct values, which removes most of the noise as long as no sample has been pushed closer to the wrong level. The digital signal is then converted back to analog via a digital-to-analog converter. If the incoming signal is already digital, DSP can process it directly to eliminate noise and minimize errors.
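The level-restoration step can be sketched by snapping each noisy sample to the nearest ideal level. This is a simplified model that assumes a two-level signal with hypothetical ideal levels of 0 V and 1 V; it omits the conversion steps and the timing recovery a real receiver also needs. The last line illustrates the limit described earlier: a sample pushed past the midpoint by noise is restored to the wrong level.

```python
# Hypothetical ideal levels (in volts) for a two-level digital signal.
IDEAL_LEVELS = (0.0, 1.0)


def restore_levels(samples, levels=IDEAL_LEVELS):
    """Snap each noisy sample to the nearest ideal level."""
    return [min(levels, key=lambda level: abs(s - level)) for s in samples]


if __name__ == "__main__":
    noisy = [0.08, 0.93, 1.12, -0.05, 0.87, 0.14]  # made-up noisy samples
    print(restore_levels(noisy))  # [0.0, 1.0, 1.0, 0.0, 1.0, 0.0]
    # A 0 V sample hit by +0.7 V of noise is beyond recovery: it becomes 1 V.
    print(restore_levels([0.7]))  # [1.0]
```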

DSP is not used only in communications systems. It is a versatile technology applied in many other fields, including audio and speech processing, sonar and radar, sensor arrays, spectral analysis, statistical data processing, image enhancement, control systems and biomedical signal interpretation.

DSP is also widely done in software. Python, for example, offers libraries such as NumPy and SciPy for analyzing, manipulating and transforming digital signals. Its readability, simplicity and breadth of scientific computing libraries make it a popular choice among professionals and researchers.
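As a small example of spectral analysis in Python, the sketch below uses NumPy's `np.fft.rfft` and `np.fft.rfftfreq` to find the strongest frequency in a noisy tone. It assumes NumPy is installed; the function name `dominant_frequency`, the 1,000 Hz sample rate, the 50 Hz tone and the noise level are arbitrary choices for illustration.

```python
import numpy as np


def dominant_frequency(signal, sample_rate):
    """Return the frequency (Hz) of the strongest non-DC component of a real signal."""
    spectrum = np.abs(np.fft.rfft(signal))                      # magnitude spectrum
    freqs = np.fft.rfftfreq(len(signal), d=1.0 / sample_rate)  # bin centers in Hz
    spectrum[0] = 0.0                                           # ignore the 0 Hz (DC) bin
    return float(freqs[np.argmax(spectrum)])


if __name__ == "__main__":
    fs = 1000                          # sample rate in Hz (illustrative)
    t = np.arange(fs) / fs             # one second of sample times
    rng = np.random.default_rng(0)
    tone = np.sin(2 * np.pi * 50 * t)  # 50 Hz test tone
    noisy = tone + 0.5 * rng.standard_normal(t.size)
    print(dominant_frequency(noisy, fs))
```

With one second of data, the frequency bins are 1 Hz apart, so this simple peak search cannot resolve frequencies more finely than that.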

What is a digital signal processing system?

A DSP system is a device or setup that performs DSP operations. It can be software, such as algorithms running on a computer; hardware, such as circuits or specialized chips; or a combination of both.

DSP systems are used in an array of applications, such as the following:

- Audio and speech processing, including sound quality enhancement, speech recognition and digital synthesizers.

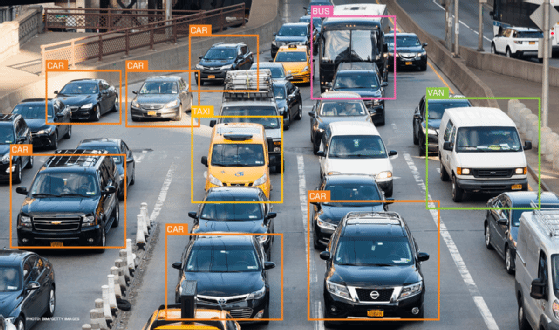

- Image and video processing, including image enhancement and restoration, image recognition, and digital video broadcasting.

- Radar and sonar, which use DSP techniques for remote sensing and to extract useful information from the signals.

- Telecommunications systems, which use DSP for data compression and decompression, error detection and correction, and modulation and demodulation.

- Biomedical engineering systems, including medical image processing and signal processing for electrocardiograms and electroencephalograms.

- Seismology devices, which use DSP to process data from seismic instruments to help interpret the structure of Earth's interior.
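Error detection, one of the telecommunications tasks listed above, can be illustrated with a CRC-32 checksum computed by Python's standard `zlib.crc32` function. The frame layout (payload followed by a 4-byte big-endian checksum) and the helper names are hypothetical. A CRC only detects errors; correcting them requires an error-correcting code such as a Hamming or Reed-Solomon code.

```python
import zlib


def attach_crc(payload: bytes) -> bytes:
    """Append a 4-byte big-endian CRC-32 of the payload (hypothetical frame layout)."""
    return payload + zlib.crc32(payload).to_bytes(4, "big")


def check_crc(frame: bytes) -> bool:
    """Recompute the CRC-32 over the payload and compare it with the received one."""
    payload, received = frame[:-4], frame[-4:]
    return zlib.crc32(payload).to_bytes(4, "big") == received


if __name__ == "__main__":
    frame = attach_crc(b"DSP frame")
    print(check_crc(frame))             # True: frame arrived intact
    corrupted = bytearray(frame)
    corrupted[0] ^= 0x01                # flip one bit to simulate channel noise
    print(check_crc(bytes(corrupted)))  # False: error detected
```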

What is digital signal processing for audio?

In audio, DSP techniques are used to improve sound quality and extract meaningful information from audio signals. In music production, DSP can enhance the quality of audio recordings, create new sounds and correct problems with audio signals.

Some additional examples of how DSP is used in audio applications include the following:

- Noise reduction to remove unwanted noise from audio signals. A simple method is a noise gate, which silences all audio below a certain threshold. Other noise reduction techniques include spectral subtraction and adaptive filtering.

- Equalization to adjust the frequency response of an audio signal to improve the sound quality of an audio recording or create a specific sound effect.

- Compression to reduce an audio file's size so that it is easier to store and transmit (data compression), or to reduce the dynamic range of an audio signal so that its level is more consistent (dynamic range compression).

- Reverb to create the effect of an audio signal being played in a large, reflective space.

- Pitch correction to adjust the pitch of an audio signal, for example to fix out-of-tune vocals or to create a specific sound effect.
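Two of the effects above, the noise gate and dynamic range compression, can be sketched by measuring the level of short blocks of samples. This is a simplified illustration, not how any particular product implements them: the `block_size` of 4 samples is a hypothetical value (real tools use far longer windows plus attack and release times), and the threshold and ratio are applied to linear RMS levels, whereas real compressors usually specify them in decibels.

```python
import math


def block_rms(block):
    """Root-mean-square level of a block of samples."""
    return math.sqrt(sum(s * s for s in block) / len(block))


def noise_gate(samples, threshold, block_size=4):
    """Silence every block whose RMS level is below the threshold."""
    out = []
    for start in range(0, len(samples), block_size):
        block = samples[start:start + block_size]
        if block_rms(block) >= threshold:
            out.extend(block)               # loud enough: pass through
        else:
            out.extend([0.0] * len(block))  # too quiet: treat as noise
    return out


def compress(samples, threshold, ratio, block_size=4):
    """Reduce the level of blocks above the threshold by the given ratio."""
    out = []
    for start in range(0, len(samples), block_size):
        block = samples[start:start + block_size]
        level = block_rms(block)
        if level > threshold:
            # Every unit of level above the threshold becomes 1/ratio units.
            target = threshold + (level - threshold) / ratio
            gain = target / level
            out.extend(s * gain for s in block)
        else:
            out.extend(block)               # below threshold: unchanged
    return out


if __name__ == "__main__":
    quiet_then_loud = [0.01, -0.02, 0.01, 0.0, 0.5, -0.5, 0.5, -0.5]
    print(noise_gate(quiet_then_loud, threshold=0.1))
    # [0.0, 0.0, 0.0, 0.0, 0.5, -0.5, 0.5, -0.5]
    loud_then_soft = [1.0, -1.0, 1.0, -1.0, 0.2, -0.2, 0.2, -0.2]
    print(compress(loud_then_soft, threshold=0.5, ratio=4.0))
    # [0.625, -0.625, 0.625, -0.625, 0.2, -0.2, 0.2, -0.2]
```

Switching gain abruptly at block boundaries, as this sketch does, can cause audible clicks, which is one reason practical gates and compressors smooth their gain changes over time.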

What is digital signal processing in Adobe Audition?

Adobe Audition uses the principles of DSP to offer features that enable precise control over audio signals. These functionalities range from basic operations, such as amplification, equalization and panning, to advanced techniques, including noise reduction, time stretching and spectral frequency editing.
