deepfake AI (deep fake)

What is deepfake AI?

Deepfake AI is a type of artificial intelligence used to create convincing images, audio and video hoaxes. The term describes both the technology and the resulting bogus content, and is a portmanteau of deep learning and fake.

Deepfakes often transform existing source content by swapping one person for another. They can also create entirely original content in which someone is shown doing or saying something they didn't do or say.

The greatest danger posed by deepfakes is their ability to spread false information that appears to come from trusted sources. For example, in 2022 a deepfake video of Ukrainian President Volodymyr Zelenskyy asking his troops to surrender was released.

Concerns have also been raised over the potential for deepfakes to interfere in elections and spread election propaganda. While deepfakes pose serious threats, they also have legitimate uses, such as video game audio and entertainment, as well as customer support and caller response applications like call forwarding and receptionist services.

How do deepfakes work?

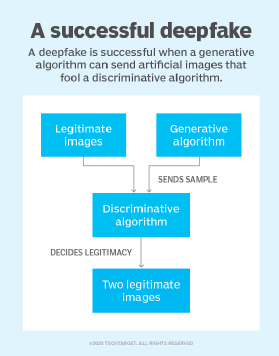

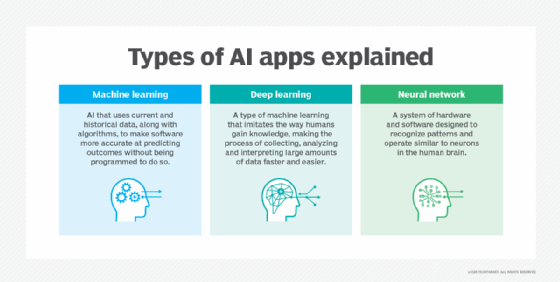

Many deepfakes use two algorithms -- a generator and a discriminator -- to create and refine fake content. A training data set of real examples of the desired output is assembled first. The generator then creates the initial fake digital content, while the discriminator compares it with the real examples to judge how realistic or fake it is. This process is repeated, allowing the generator to improve at creating realistic content and the discriminator to become more skilled at spotting flaws for the generator to correct.

The combination of the generator and discriminator algorithms creates a generative adversarial network. A GAN uses deep learning to recognize patterns in real images and then uses those patterns to create the fakes. When creating a deepfake photograph, a GAN system views photographs of the target from an array of angles to capture all the details and perspectives. When creating a deepfake video, the GAN views the video from various angles and also analyzes behavior, movement and speech patterns. This information is then run through the discriminator multiple times to fine-tune the realism of the final image or video.
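To make the generator-discriminator loop concrete, here is a minimal Python sketch of a GAN trained on single numbers rather than images. Everything in it is an illustrative assumption, not how any production deepfake tool is built: the "real" data is drawn from a normal distribution with mean 2.0, the generator is just `shift + scale * z`, and the discriminator is a single logistic unit. The function `train_toy_gan` and its parameters are hypothetical.

```python
import math
import random


def sigmoid(t):
    # Clamp the logit so math.exp cannot overflow on extreme inputs.
    t = max(-60.0, min(60.0, t))
    return 1.0 / (1.0 + math.exp(-t))


def train_toy_gan(real_mean=2.0, real_std=1.0, steps=3000, batch=32, lr=0.05, seed=0):
    """Train a toy 1-D GAN and return the generator's (shift, scale)."""
    rng = random.Random(seed)
    shift, scale = 0.0, 1.0  # generator: G(z) = shift + scale * z, z ~ N(0, 1)
    w, c = 0.0, 0.0          # discriminator: D(x) = sigmoid(w * x + c) = P(x is real)

    for _ in range(steps):
        real = [rng.gauss(real_mean, real_std) for _ in range(batch)]
        noise = [rng.gauss(0.0, 1.0) for _ in range(batch)]

        # Discriminator step: descend on -log D(real) - log(1 - D(fake)).
        gw = gc = 0.0
        for x in real:
            d = sigmoid(w * x + c)
            gw -= (1.0 - d) * x
            gc -= 1.0 - d
        for z in noise:
            x = shift + scale * z
            d = sigmoid(w * x + c)
            gw += d * x
            gc += d
        w -= lr * gw / batch
        c -= lr * gc / batch

        # Generator step: descend on -log D(fake), pushing the fakes toward
        # whatever the updated discriminator currently scores as real.
        g_shift = g_scale = 0.0
        for z in noise:
            x = shift + scale * z
            dloss_dx = -(1.0 - sigmoid(w * x + c)) * w
            g_shift += dloss_dx
            g_scale += dloss_dx * z
        shift -= lr * g_shift / batch
        scale -= lr * g_scale / batch

    return shift, scale


if __name__ == "__main__":
    shift, scale = train_toy_gan()
    print(f"generator shift {shift:.2f} (real mean 2.0), scale {scale:.2f}")
```

Because this one-unit discriminator mainly detects where the two sets of numbers sit, not how spread out they are, the toy generator learns to match the real mean but not necessarily its spread. Image GANs replace both players with deep neural networks so that far richer differences, such as facial detail, can be detected and corrected.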

Deepfake videos are created in one of two ways. They can use an original video source of the target, where the person is made to say and do things they never did; or they can swap the person's face onto a video of another individual, also known as a face swap.

The following are some specific approaches to creating deepfakes:

- Source video deepfakes. When working from a source video, a neural network-based deepfake autoencoder analyzes the content to understand relevant attributes of the target, such as facial expressions and body language. It then imposes these characteristics onto the original video. This autoencoder includes an encoder, which encodes the relevant attributes; and a decoder, which imposes these attributes onto the target video.

- Audio deepfakes. For audio deepfakes, a GAN clones the audio of a person's voice, creates a model based on the vocal patterns and uses that model to make the voice say anything the creator wants. This technique is commonly used by video game developers.

- Lip syncing. Lip syncing is another common technique used in deepfakes. Here, the deepfake maps a voice recording to the video, making it appear as though the person in the video is speaking the words in the recording. If the audio itself is a deepfake, then the video adds an extra layer of deception. This technique is supported by recurrent neural networks.
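The encoder/decoder approach used for face swaps is often described as one encoder shared by two identities, each with its own decoder: each pair is trained to rebuild its own person's faces, and the swap encodes person A and decodes with person B's decoder. The Python sketch below shows that data flow with tiny linear layers trained by gradient descent. It is an assumption-heavy toy: the six-number "faces," the two-number latent code and every name in it (`train_face_swap`, `decoder_a`, `decoder_b`) are hypothetical stand-ins for real images and deep convolutional networks.

```python
import random


def matvec(m, v):
    # Multiply matrix m (a list of rows) by vector v.
    return [sum(a * b for a, b in zip(row, v)) for row in m]


def rand_matrix(rows, cols, rng, scale):
    return [[rng.uniform(-scale, scale) for _ in range(cols)] for _ in range(rows)]


def sgd_step(encoder, decoder, x, lr):
    """One gradient step on ||decoder(encoder(x)) - x||^2, updating both in place."""
    z = matvec(encoder, x)
    r = [xh - xi for xh, xi in zip(matvec(decoder, z), x)]  # reconstruction error
    # Gradient w.r.t. the latent code, computed before the decoder changes.
    g_z = [2 * sum(decoder[i][j] * r[i] for i in range(len(r))) for j in range(len(z))]
    for i, ri in enumerate(r):
        for j, zj in enumerate(z):
            decoder[i][j] -= lr * 2 * ri * zj
    for j, gj in enumerate(g_z):
        for k, xk in enumerate(x):
            encoder[j][k] -= lr * gj * xk


def recon_loss(encoder, decoder, faces):
    total = 0.0
    for x in faces:
        x_hat = matvec(decoder, matvec(encoder, x))
        total += sum((a - b) ** 2 for a, b in zip(x_hat, x))
    return total / len(faces)


def train_face_swap(epochs=200, lr=0.01, seed=0, dim=6, latent=2):
    rng = random.Random(seed)
    # Hypothetical toy "faces": an identity-specific basis applied to a random
    # "expression" vector of `latent` numbers.
    basis_a = rand_matrix(dim, latent, rng, 0.5)
    basis_b = rand_matrix(dim, latent, rng, 0.5)
    faces_a = [matvec(basis_a, [rng.gauss(0, 1) for _ in range(latent)]) for _ in range(50)]
    faces_b = [matvec(basis_b, [rng.gauss(0, 1) for _ in range(latent)]) for _ in range(50)]

    encoder = rand_matrix(latent, dim, rng, 0.1)    # shared by both identities
    decoder_a = rand_matrix(dim, latent, rng, 0.1)  # rebuilds identity A
    decoder_b = rand_matrix(dim, latent, rng, 0.1)  # rebuilds identity B

    def total_loss():
        return recon_loss(encoder, decoder_a, faces_a) + recon_loss(encoder, decoder_b, faces_b)

    before = total_loss()
    for _ in range(epochs):
        for xa, xb in zip(faces_a, faces_b):
            sgd_step(encoder, decoder_a, xa, lr)
            sgd_step(encoder, decoder_b, xb, lr)
    after = total_loss()

    # The swap: encode a face of A with the shared encoder, decode with B's decoder.
    swapped = matvec(decoder_b, matvec(encoder, faces_a[0]))
    return before, after, swapped
```

In this linear toy nothing forces the two identities' latent codes to line up, so `swapped` only demonstrates the "shared encoder, other person's decoder" pattern. Practical face-swap models use deep networks and large sets of aligned face images so that the shared code is intended to capture pose and expression rather than identity.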

Technology required to develop deepfakes

The development of deepfakes is becoming easier, more accurate and more prevalent as the following technologies are developed and enhanced:

- GAN neural network technology, built on generator and discriminator algorithms, is used to develop much of today's deepfake content.

- Convolutional neural networks analyze patterns in visual data. CNNs are used for facial recognition and movement tracking.

- Autoencoders are a neural network technology that identifies the relevant attributes of a target such as facial expressions and body movements, and then imposes these attributes onto the source video.

- Natural language processing is used to create deepfake audio and text. NLP algorithms analyze the attributes of a target's speech and then generate original text that mimics those attributes.

- High-performance computing provides the significant computing power that deepfakes require.
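As an illustration of what a convolutional layer computes, this Python sketch slides a small kernel across a grayscale image and sums the element-wise products at each position. The 3x3 vertical-edge kernel and the tiny image are hand-picked assumptions; in a CNN, kernel values are learned during training and many kernels are stacked in layers.

```python
def conv2d(image, kernel):
    """Slide kernel over image with no padding and a stride of 1.

    Like most deep learning libraries, this computes cross-correlation
    (the kernel is not flipped), which CNN layers conventionally call convolution.
    """
    kh, kw = len(kernel), len(kernel[0])
    out_h = len(image) - kh + 1
    out_w = len(image[0]) - kw + 1
    return [
        [
            sum(kernel[i][j] * image[r + i][c + j] for i in range(kh) for j in range(kw))
            for c in range(out_w)
        ]
        for r in range(out_h)
    ]


# Hand-picked vertical-edge kernel: responds where brightness rises left to right.
EDGE_KERNEL = [
    [-1, 0, 1],
    [-1, 0, 1],
    [-1, 0, 1],
]

# Tiny hypothetical grayscale image: dark on the left, bright on the right.
image = [
    [0, 0, 0, 9, 9],
    [0, 0, 0, 9, 9],
    [0, 0, 0, 9, 9],
    [0, 0, 0, 9, 9],
]

feature_map = conv2d(image, EDGE_KERNEL)  # [[0, 27, 27], [0, 27, 27]]
```

The feature map is nonzero only where the kernel's window spans the dark-to-bright boundary. Deeper CNN layers can combine low-level responses like this into features such as the outline of an eye or mouth.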

According to the U.S. Department of Homeland Security's "Increasing Threat of Deepfake Identities" report, several tools are commonly used to generate deepfakes in a matter of seconds. Those tools include Deep Art Effects, Deepswap, Deep Video Portraits, FaceApp, FaceMagic, MyHeritage, Wav2Lip, Wombo and Zao.

How are deepfakes commonly used?

The use of deepfakes varies significantly. The main uses include the following:

- Art. Deepfakes are used to generate new music from an artist's existing body of work.

- Blackmail and reputation harm. Examples include placing a target's image in an illegal, inappropriate or otherwise compromising situation, such as lying to the public, engaging in explicit sexual acts or taking drugs. These videos are used to extort victims, ruin reputations, get revenge or simply cyberbully. The most common blackmail or revenge use is nonconsensual deepfake porn, also known as revenge porn.

- Caller response services. These services use deepfakes to provide personalized responses to caller requests that involve call forwarding and other receptionist services.

- Customer phone support. These services use fake voices for simple tasks such as checking an account balance or filing a complaint.

- Entertainment. Hollywood movies and video games clone and manipulate actors' voices for certain scenes. Productions use this technique when a scene is hard to shoot, in post-production when an actor is no longer on set to record their voice, or to save the actor and the production team time. Deepfakes are also used for satire and parody content in which the audience understands the video isn't real but enjoys the humorous situation the deepfake creates. An example is the 2023 deepfake of Dwayne "The Rock" Johnson as Dora the Explorer.

- False evidence. This involves the fabrication of false images or audio that can be used as evidence implying guilt or innocence in a legal case.

- Fraud. Deepfakes are used to impersonate an individual to obtain personally identifiable information (PII), such as bank account and credit card numbers. This can sometimes include impersonating executives of companies or other employees with credentials to access sensitive information, which is a major cybersecurity threat.

- Misinformation and political manipulation. Deepfake videos of politicians or trusted sources are used to sway public opinion and, in the case of the deepfake of Ukrainian President Volodymyr Zelenskyy, create confusion in warfare. This is sometimes referred to as spreading fake news.

- Stock manipulation. Forged deepfake materials are used to affect a company's stock price. For instance, a fake video of a chief executive officer making damaging statements about their company could lower its stock price. A fake video about a technological breakthrough or product launch could raise a company's stock.

- Texting. The U.S. Department of Homeland Security's "Increasing Threat of Deepfake Identities" report cited text messaging as a future use of deepfake technology. Threat actors could use deepfake techniques to replicate a user's texting style, according to the report.

Are deepfakes legal?

Deepfakes are generally legal, and there is little law enforcement can do about them, despite the serious threats they pose. Deepfakes are only illegal if they violate existing laws, such as those against child pornography, defamation or hate speech.

Three states have laws concerning deepfakes. According to Police Chief Magazine, Texas bans deepfakes that aim to influence elections, Virginia bans the dissemination of deepfake pornography, and California has laws against the use of political deepfakes within 60 days of an election and nonconsensual deepfake pornography.

Laws against deepfakes are scarce largely because most people are unaware of the technology, its uses and its dangers. As a result, victims in most deepfake cases don't get protection under the law.

How are deepfakes dangerous?

Deepfakes pose significant dangers despite being largely legal, including the following:

- Blackmail and reputational harm that put targets in legally compromising situations.

- Political misinformation, such as nation-state threat actors using deepfakes for nefarious purposes.

- Election interference, such as creating fake videos of candidates.

- Stock manipulation where fake content is created to influence stock prices.

- Fraud where an individual is impersonated to steal financial account information and other PII.

Methods for detecting deepfakes

There are several best practices for detecting deepfake attacks. The following are signs of possible deepfake content:

- Unusual or awkward facial positioning.

- Unnatural facial or body movement.

- Unnatural coloring.

- Videos that look odd when zoomed in or magnified.

- Inconsistent audio.

- People who don't blink.
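The blinking check can be partly automated. One published heuristic, the eye aspect ratio (EAR), compares the vertical and horizontal distances between six landmarks around each eye; the ratio drops sharply when the eye closes. This Python sketch assumes the landmarks have already been extracted for every frame by a facial-landmark detector (not shown), and its threshold, minimum run length and minimum blink rate are illustrative guesses, not calibrated values.

```python
import math


def eye_aspect_ratio(eye):
    """EAR for six (x, y) landmarks p1..p6 around one eye.

    p1 and p4 are the eye corners; p2/p6 and p3/p5 are upper/lower lid pairs.
    """
    p1, p2, p3, p4, p5, p6 = eye
    vertical = math.dist(p2, p6) + math.dist(p3, p5)
    horizontal = math.dist(p1, p4)
    return vertical / (2.0 * horizontal)


def count_blinks(ear_per_frame, threshold=0.2, min_frames=2):
    """Count runs of at least min_frames consecutive frames with EAR below threshold."""
    blinks = run = 0
    for ear in ear_per_frame:
        if ear < threshold:
            run += 1
            continue
        if run >= min_frames:
            blinks += 1
        run = 0
    if run >= min_frames:  # a blink still in progress when the clip ends
        blinks += 1
    return blinks


def blinks_look_unnatural(ear_per_frame, fps, min_blinks_per_minute=5.0):
    """Flag clips whose blink rate falls below an assumed minimum."""
    minutes = len(ear_per_frame) / fps / 60.0
    return count_blinks(ear_per_frame) / minutes < min_blinks_per_minute
```

Generators can now reproduce natural blinking, so a normal blink rate is not evidence that a video is genuine; a low one is only a reason to look more closely.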

In textual deepfakes, there are a few indicators:

- Misspellings.

- Sentences that don't flow naturally.

- Suspicious source email addresses.

- Phrasing that doesn't match the supposed sender.

- Out-of-context messages that aren't relevant to any discussion, event or issue.

However, AI is steadily overcoming some of these indicators, such as with tools that support natural blinking.
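The suspicious-sender check can be partly automated by comparing the sender's domain with domains you trust and flagging near misses, such as a digit swapped in for a letter. This Python sketch is a hypothetical helper, not part of any product mentioned here; the trusted list, the two-edit threshold and the function names are assumptions, and a real mail filter would typically rely on more signals than the domain alone.

```python
def edit_distance(a, b):
    """Levenshtein distance: fewest single-character inserts, deletes or substitutions."""
    prev = list(range(len(b) + 1))
    for i, ca in enumerate(a, start=1):
        cur = [i]
        for j, cb in enumerate(b, start=1):
            cur.append(min(
                prev[j] + 1,               # delete ca
                cur[j - 1] + 1,            # insert cb
                prev[j - 1] + (ca != cb),  # substitute (free if the characters match)
            ))
        prev = cur
    return prev[-1]


def sender_warning(address, trusted_domains, max_edits=2):
    """Return None for a trusted domain, otherwise a short warning string."""
    domain = address.rsplit("@", 1)[-1].strip().lower()
    if domain in trusted_domains:
        return None
    for trusted in sorted(trusted_domains):
        if edit_distance(domain, trusted) <= max_edits:
            return f"lookalike of {trusted}"
    return "unrecognized domain"


# Hypothetical usage with a placeholder trusted domain.
TRUSTED = {"example.com"}
print(sender_warning("ceo@examp1e.com", TRUSTED))  # prints "lookalike of example.com"
```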

How to defend against deepfakes

Companies, organizations and government agencies, such as the U.S. Department of Defense's Defense Advanced Research Projects Agency, are developing technology to identify and block deepfakes. Some social media companies use blockchain technology to verify the source of videos and images before allowing them onto their platforms. This way, trusted sources are established and fakes are prevented. Along these lines, Facebook and Twitter have both banned malicious deepfakes.
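The idea of verifying where a video or image came from can be sketched as an append-only log in which each entry stores a media file's SHA-256 hash, its source and the hash of the previous entry, so that altering any earlier entry breaks the chain. This is a single-process Python toy, not a distributed blockchain and not any platform's actual system; the `ProvenanceLedger` class and its methods are hypothetical.

```python
import hashlib
import json

GENESIS = "0" * 64  # placeholder "previous hash" for the first entry


def _digest(record):
    # Hash a record deterministically (sorted keys) so verification can recompute it.
    return hashlib.sha256(json.dumps(record, sort_keys=True).encode()).hexdigest()


class ProvenanceLedger:
    """Append-only, hash-chained log of media fingerprints and their sources."""

    def __init__(self):
        self.blocks = []

    def register(self, media_bytes, source):
        record = {
            "media_sha256": hashlib.sha256(media_bytes).hexdigest(),
            "source": source,
            "prev_hash": self.blocks[-1]["hash"] if self.blocks else GENESIS,
        }
        # Each entry's hash covers its record and, through prev_hash, all earlier entries.
        record["hash"] = _digest(record)
        self.blocks.append(record)
        return record["hash"]

    def chain_is_intact(self):
        prev_hash = GENESIS
        for block in self.blocks:
            body = {k: v for k, v in block.items() if k != "hash"}
            if block["prev_hash"] != prev_hash or block["hash"] != _digest(body):
                return False
            prev_hash = block["hash"]
        return True

    def source_of(self, media_bytes):
        """Return the registered source of byte-identical media, or None."""
        digest = hashlib.sha256(media_bytes).hexdigest()
        for block in self.blocks:
            if block["media_sha256"] == digest:
                return block["source"]
        return None
```

An exact hash changes with any byte-level change, including harmless re-encoding or resizing, so a lookup like this only confirms byte-identical copies of registered media.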

Deepfake protection software is available from the following companies:

- Adobe has a system that lets creators attach a signature to videos and photos with details about their creation.

- Microsoft has AI-powered deepfake detection software that analyzes videos and photos to provide a confidence score that shows whether the media has been manipulated.

- Operation Minerva uses catalogs of previously discovered deepfakes to tell if a new video is simply a modification of an existing fake that has been discovered and given a digital fingerprint.

- Sensity offers a detection platform that uses deep learning to spot indications of deepfake media in the same way antimalware tools look for virus and malware signatures. Users are alerted via email when they view a deepfake.
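Catalog matching in the style described for Operation Minerva can be sketched with a perceptual hash: reduce each frame to a short fingerprint that survives small edits, then look for catalog entries that differ by only a few bits. The source does not say how Operation Minerva builds its fingerprints, so this Python sketch substitutes a simple average hash (aHash) over a frame already downscaled to 8x8 grayscale; the catalog format, the bit threshold and the function names are assumptions.

```python
def average_hash(gray_8x8):
    """64-bit average hash: one bit per pixel, set when the pixel is brighter than the mean."""
    pixels = [p for row in gray_8x8 for p in row]
    mean = sum(pixels) / len(pixels)
    bits = 0
    for p in pixels:
        bits = (bits << 1) | int(p > mean)
    return bits


def hamming(a, b):
    # Number of differing bits between two fingerprints.
    return bin(a ^ b).count("1")


def match_known_fake(gray_8x8, catalog, max_bits=5):
    """Return the label of the closest catalogued fake within max_bits, else None."""
    h = average_hash(gray_8x8)
    best_label, best_dist = None, max_bits + 1
    for label, known_hash in catalog.items():
        d = hamming(h, known_hash)
        if d < best_dist:
            best_label, best_dist = label, d
    return best_label
```

A uniform brightness change leaves this fingerprint unchanged, because every pixel and the mean shift together, whereas a cryptographic hash of the same frame would change completely.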

Notable examples of deepfakes

There are several notable examples of deepfakes, including the following:

- Facebook founder Mark Zuckerberg was the victim of a deepfake that showed him boasting about how Facebook "owns" its users. The video was designed to show how people can use social media platforms such as Facebook to deceive the public.

- U.S. President Joe Biden was the victim of numerous deepfakes during the 2020 presidential campaign showing him in exaggerated states of cognitive decline, meant to influence the election. Presidents Barack Obama and Donald Trump have also been victims of deepfake videos, some to spread disinformation and some as satire and entertainment.

- During the Russian invasion of Ukraine in 2022, Ukrainian President Volodymyr Zelenskyy was portrayed telling his troops to surrender to the Russians.

History of deepfake AI technology

Deepfake AI is a relatively new technology, with origins in the manipulation of photos through programs such as Adobe Photoshop. By the mid-2010s, cheap computing power, large data sets, AI and machine learning technology all combined to improve the sophistication of deep learning algorithms.

In 2014, the GAN, the technology at the heart of deepfakes, was developed by University of Montreal researcher Ian Goodfellow and his colleagues. In 2017, an anonymous Reddit user named "deepfakes" began releasing deepfake videos of celebrities, as well as a tool that let users swap faces in videos. These went viral on the internet and in social media.

The sudden popularity of deepfake content led tech companies such as Facebook, Google and Microsoft to invest in developing tools to detect deepfakes. Despite the efforts of tech companies and governments to combat deepfakes and take on the deepfake detection challenge, the technology continues to advance and generate increasingly convincing deepfake images and videos.
