sensor

What is a sensor?

A sensor is a device that detects and responds to some type of input from the physical environment. The input can be light, heat, motion, moisture, pressure or any number of other environmental phenomena. The output is generally a signal that is converted to a human-readable display at the sensor location or transmitted electronically over a network for reading or further processing.

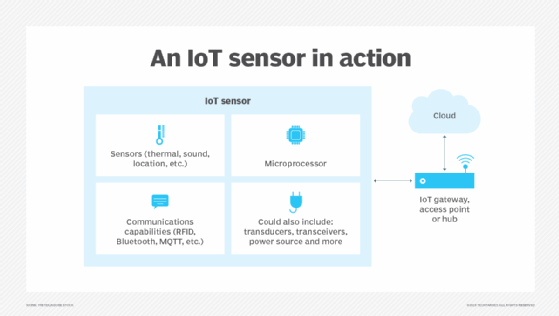

Sensors play a pivotal role in the internet of things (IoT). They make it possible to create an ecosystem for collecting and processing data about a specific environment so it can be monitored, managed and controlled more easily and efficiently. IoT sensors are used in homes, out in the field, in automobiles, on airplanes, in industrial settings and in other environments. Sensors bridge the gap between the physical world and the logical world, acting as the eyes and ears for a computing infrastructure that analyzes and acts upon the data they collect.

What are the types of sensors?

Sensors can be categorized in multiple ways. One common approach is to classify them as either active or passive. An active sensor requires an external power source to respond to environmental input and generate output. For example, sensors used in weather satellites often require a source of energy to provide meteorological data about the Earth's atmosphere.

A passive sensor, on the other hand, doesn't require an external power source to detect environmental input. It relies on the environment itself for its power, using sources such as light or thermal energy. A good example is the mercury-based glass thermometer. The mercury expands and contracts in response to fluctuating temperatures, causing the level to be higher or lower in the glass tube. External markings provide a human-readable gauge for viewing the temperature.

Some types of sensors, such as seismic and infrared light sensors, are available in both active and passive forms. The environment in which the sensor is deployed typically determines which type is best suited for the application.

Another way to classify sensors is by whether they're analog or digital, based on the type of output they produce. Analog sensors convert the environmental input into analog output signals, which vary continuously. The thermocouple in a gas water heater is a good example of an analog sensor. The water heater's pilot light continuously heats the thermocouple. If the pilot light goes out, the thermocouple cools, producing a different analog signal that indicates the gas should be shut off.
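The thermocouple example above amounts to comparing a continuously varying voltage against a cutoff. The following Python sketch models that decision in software. The function name, the sample readings and the 10 mV cutoff are all hypothetical, chosen only for illustration.

```python
# Minimal software model of the water-heater safety check.
# ASSUMPTION: the 10 mV cutoff is a hypothetical value, not a real appliance spec.
PILOT_OUT_THRESHOLD_MV = 10.0


def gas_valve_open(thermocouple_mv: float,
                   threshold_mv: float = PILOT_OUT_THRESHOLD_MV) -> bool:
    """Return True while the analog reading indicates the pilot light is still lit."""
    # A heated thermocouple produces a higher voltage; a cooling one produces less.
    return thermocouple_mv >= threshold_mv


# A continuous signal, sampled as the pilot light goes out and the thermocouple cools.
readings_mv = [25.0, 24.6, 18.2, 11.5, 7.9, 3.1]
for mv in readings_mv:
    state = "open" if gas_valve_open(mv) else "closed"
    print(f"{mv:5.1f} mV -> gas valve {state}")
```

The analog signal itself carries no "on/off" meaning; the threshold comparison is what turns it into a shutoff decision.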

In contrast to analog sensors, digital sensors convert the environmental input into discrete digital signals that are transmitted in a binary format (1s and 0s). Digital sensors have become common across industries, replacing analog sensors in many situations. For example, digital sensors are now used to measure humidity, temperature, atmospheric pressure, air quality and many other environmental phenomena.
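Producing those discrete values means quantizing a continuous input into a fixed number of steps. This Python sketch shows the idea for a hypothetical 10-bit converter with a 3.3 V reference; both values are assumptions, and real sensors differ in resolution, range and rounding behavior.

```python
# Minimal sketch of analog-to-digital conversion (quantization).
# ASSUMPTIONS: hypothetical 10-bit resolution and 3.3 V reference voltage.
def quantize(voltage: float, v_ref: float = 3.3, bits: int = 10) -> int:
    """Map a voltage in [0, v_ref] to an integer code in [0, 2**bits - 1]."""
    max_code = (1 << bits) - 1
    # Inputs outside the converter's range are clamped to its limits.
    clamped = min(max(voltage, 0.0), v_ref)
    # Scale to the code range and round to the nearest step.
    return round(clamped / v_ref * max_code)


def to_binary(code: int, bits: int = 10) -> str:
    """Format a code as the fixed-width string of 1s and 0s a digital sensor would send."""
    return format(code, f"0{bits}b")


for v in (0.0, 1.2, 3.3):
    code = quantize(v)
    print(f"{v:.2f} V -> {code:4d} -> {to_binary(code)}")
```

With 10 bits there are 1,024 possible codes, so each step represents roughly 3.2 mV; a finer input change than that is invisible in the digital output.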

As with active and passive sensors, some types of sensors -- such as thermal or pressure sensors -- are available in both analog and digital forms. In this case, too, the environment in which the sensor will operate typically determines which is the best option.

Sensors are also commonly categorized by the type of environmental factors they monitor. Here are some common examples:

- Accelerometer. This type of sensor detects acceleration, including the steady pull of gravity, which makes it possible to measure tilt and vibration as well as changes in motion. Accelerometers are used in a wide range of industries, from consumer electronics to professional sports to aerospace and aviation.

- Chemical. Chemical sensors detect a specific chemical substance within a medium (gas, liquid or solid). A chemical sensor can be used to detect soil nutrient levels in a crop field, smoke or carbon monoxide in a room, pH levels in a body of water, the amount of alcohol on someone's breath and much more. For example, an oxygen sensor in a car's emission control system monitors the oxygen remaining in the exhaust to gauge the air-fuel ratio, usually through a chemical reaction that generates a voltage. A computer in the engine compartment reads the voltage and, if the mixture is not optimal, readjusts the ratio.

- Humidity. These sensors detect the amount of water vapor in the air to determine the relative humidity. Humidity sensors often include temperature readings because relative humidity depends on the air temperature. The sensors are used in a wide range of industries and settings, including agriculture, manufacturing, data centers, meteorology, and heating, ventilation and air conditioning (HVAC).

- Level. A level sensor determines the level of a physical substance such as water, fuel, coolant, grain, fertilizer or waste. Motorists, for example, rely on their vehicle's fuel level sensor to ensure they don't end up stranded on the side of the road. Level sensors are also used in tsunami warning systems.

- Motion. Motion detectors sense physical movement in a defined space (the field of detection) and can be used to control lights, cameras, parking gates, water faucets, security systems, automatic door openers and numerous other systems. Many of these sensors send out some type of energy -- such as microwaves, ultrasonic waves or light beams -- and detect when the flow of energy is interrupted by something entering its path. Others, such as passive infrared (PIR) sensors, emit nothing and instead detect changes in the infrared energy given off by people and objects.

- Optical. Optical sensors, also called photosensors, can detect light waves at different points in the light spectrum, including ultraviolet light, visible light and infrared light. Optical sensors are used extensively in smartphones, robotics, Blu-ray players, home security systems, medical devices and a wide range of other systems.

- Pressure. These sensors detect the pressure of a liquid or gas and are used extensively in machinery, automobiles, aircraft, HVAC systems and other environments. They also play an important role in meteorology by measuring atmospheric pressure. In addition, pressure sensors can be used to monitor the flow of gases or liquids, often so that the flow can be regulated.

- Proximity. Proximity sensors detect the presence of a nearby object or determine the distance between objects. They are used in elevators, assembly lines, parking lots, retail stores, automobiles, robotics and numerous other environments.

- Temperature. These sensors measure the temperature of a target medium, whether gas, liquid or solid. Temperature sensors are used in a wide range of devices and environments, such as appliances, machinery, aircraft, automobiles, computers, greenhouses, farms and thermostats.

- Touch. Touch sensing devices detect physical contact on a monitored surface. Touch sensors are used extensively in electronic devices to support trackpad and touchscreen technologies. They're also used in many other systems, such as elevators, robotics and soap dispensers.
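The accelerometer entry in the list above mentions measuring tilt. Because a stationary accelerometer senses only gravity, the direction of that reading gives the device's orientation. This Python sketch assumes a 3-axis reading in units of g and one common axis convention (z pointing up when the device lies flat); the function name is hypothetical, and real devices document their own axis orientation.

```python
import math


def tilt_degrees(ax: float, ay: float, az: float) -> tuple[float, float]:
    """Estimate (pitch, roll) in degrees from a stationary 3-axis reading in g.

    ASSUMPTIONS: the device is at rest, so the only acceleration measured is
    gravity, and the axes follow a z-up-when-flat convention.
    """
    # Pitch: rotation about the y axis, from the x component versus the rest.
    pitch = math.degrees(math.atan2(-ax, math.hypot(ay, az)))
    # Roll: rotation about the x axis, from the y and z components.
    roll = math.degrees(math.atan2(ay, az))
    return pitch, roll


print(tilt_degrees(0.0, 0.0, 1.0))      # lying flat
print(tilt_degrees(0.0, 0.707, 0.707))  # rolled 45 degrees about the x axis
```

This only holds while the device is still; during motion, the sensed acceleration mixes gravity with movement, which is why tilt estimates are often combined with other sensors.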
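The oxygen-sensor example in the chemical entry above describes a feedback loop: read a voltage, decide whether the mixture is off, adjust. This Python sketch shows that loop under stated assumptions: a narrowband sensor whose voltage is high (around 0.9 V) for a rich mixture and low (around 0.1 V) for a lean one, a 0.45 V switch point, and a hypothetical fuel multiplier nudged by 1% per reading within hypothetical bounds. Real engine computers use calibrated values and more elaborate control.

```python
# Minimal closed-loop fuel-trim sketch driven by a narrowband oxygen sensor.
# ASSUMPTIONS: 0.45 V switch point; step size and limits are hypothetical.
SWITCH_POINT_V = 0.45
TRIM_STEP = 0.01
TRIM_LIMITS = (0.8, 1.2)  # hypothetical bounds on the fuel multiplier


def update_fuel_trim(trim: float, o2_voltage: float) -> float:
    """Return the next fuel multiplier given one oxygen-sensor voltage."""
    if o2_voltage > SWITCH_POINT_V:
        # High voltage: little oxygen left in the exhaust, so the mixture is rich.
        trim -= TRIM_STEP
    else:
        # Low voltage: excess oxygen in the exhaust, so the mixture is lean.
        trim += TRIM_STEP
    low, high = TRIM_LIMITS
    return min(max(trim, low), high)


trim = 1.0
for volts in (0.80, 0.75, 0.20, 0.15, 0.70):
    trim = update_fuel_trim(trim, volts)
    print(f"{volts:.2f} V -> fuel multiplier {trim:.2f}")
```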
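The humidity entry in the list above notes that relative humidity depends on air temperature. A short Python sketch makes that concrete by holding the amount of water vapor fixed and varying only the temperature. It uses the Magnus approximation for saturation vapor pressure; the constants (6.112 hPa, 17.62, 243.12 °C) are one commonly used set, assumed here, and the 12 hPa vapor pressure is a hypothetical input.

```python
import math


def saturation_vapor_pressure_hpa(temp_c: float) -> float:
    """Magnus approximation over liquid water (constants assumed, one common set)."""
    return 6.112 * math.exp(17.62 * temp_c / (243.12 + temp_c))


def relative_humidity(vapor_pressure_hpa: float, temp_c: float) -> float:
    """Relative humidity in percent: actual vapor pressure over the saturation value."""
    return 100.0 * vapor_pressure_hpa / saturation_vapor_pressure_hpa(temp_c)


vapor_hpa = 12.0  # hypothetical, fixed amount of water vapor
for t in (15.0, 20.0, 25.0):
    print(f"{t:.0f} °C -> {relative_humidity(vapor_hpa, t):.1f}% RH")
```

The same air reads as more humid when it is cooler, which is why a humidity reading without a temperature reading is hard to interpret.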
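For the beam-interruption style of motion detector described in the motion entry above, detection reduces to watching a receiver's signal for the moment it drops below a threshold. In this Python sketch the signal levels are hypothetical normalized values (1.0 means the full beam is received), and the 0.5 threshold is an assumption.

```python
def beam_break_events(samples: list[float], threshold: float) -> list[int]:
    """Return the sample indices where the received beam first becomes interrupted."""
    events = []
    blocked = False
    for i, level in enumerate(samples):
        now_blocked = level < threshold
        # Report only the transition from clear to blocked, not every blocked sample.
        if now_blocked and not blocked:
            events.append(i)
        blocked = now_blocked
    return events


# Hypothetical receiver readings: two separate objects cross the beam.
readings = [1.0, 0.95, 0.2, 0.1, 0.9, 1.0, 0.3, 0.98]
print(beam_break_events(readings, threshold=0.5))
```

Reporting transitions rather than every low sample keeps one object lingering in the beam from triggering repeated events.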
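The pressure entry in the list above mentions monitoring flow. One classic way to do that, assumed here rather than described in the article, is to measure the pressure drop across a restriction such as an orifice plate, where flow is roughly proportional to the square root of that drop. The coefficient `k` is hypothetical and would in practice come from calibration of a specific installation.

```python
import math


def flow_from_differential_pressure(delta_p_pa: float, k: float) -> float:
    """Estimate flow rate from the pressure drop across a restriction.

    ASSUMPTION: flow is proportional to the square root of the pressure drop,
    with k a hypothetical calibration coefficient for one pipe and restriction.
    """
    if delta_p_pa <= 0.0:
        return 0.0  # no forward pressure drop, so report no flow
    return k * math.sqrt(delta_p_pa)


print(flow_from_differential_pressure(400.0, k=0.5))
print(flow_from_differential_pressure(1600.0, k=0.5))
```

Because of the square root, quadrupling the measured pressure drop only doubles the estimated flow.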
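The proximity entry in the list above does not say how distance is determined. One common approach, assumed here for illustration, is ultrasonic echo ranging: the sensor emits a pulse, times the echo and halves the round-trip path. The speed of sound is taken as roughly 343 m/s (dry air at about 20 °C), and the function name is hypothetical.

```python
# ASSUMPTION: approximate speed of sound in dry air at about 20 °C.
SPEED_OF_SOUND_M_S = 343.0


def echo_distance_m(round_trip_s: float, speed_m_s: float = SPEED_OF_SOUND_M_S) -> float:
    """Distance to an object from the time between sending a pulse and hearing its echo."""
    # The pulse travels to the object and back, so only half the path is the distance.
    return speed_m_s * round_trip_s / 2.0


print(echo_distance_m(0.01))  # a 10 ms round trip
```

Since the speed of sound changes with air temperature, ultrasonic rangers are sometimes paired with a temperature sensor to correct the estimate.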
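The temperature entry in the list above does not name a sensing element. One common choice, assumed here, is an NTC thermistor, whose resistance falls as temperature rises; the Beta equation 1/T = 1/T0 + (1/B)·ln(R/R0), with temperatures in kelvins, converts a measured resistance into a temperature. The 10 kΩ at 25 °C and B = 3950 K values describe a typical part and are assumptions, not values from the article.

```python
import math


def ntc_temperature_c(resistance_ohm: float,
                      r0_ohm: float = 10_000.0,  # ASSUMED resistance at t0_c
                      t0_c: float = 25.0,
                      beta_k: float = 3950.0) -> float:  # ASSUMED Beta constant
    """Convert an NTC thermistor's resistance to degrees Celsius via the Beta equation."""
    t0_k = t0_c + 273.15
    # The Beta equation works in kelvins and uses the natural log of the resistance ratio.
    inv_t = 1.0 / t0_k + math.log(resistance_ohm / r0_ohm) / beta_k
    return 1.0 / inv_t - 273.15


for r in (20_000.0, 10_000.0, 5_000.0):
    print(f"{r:>8.0f} ohm -> {ntc_temperature_c(r):.1f} °C")
```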

These are only some of the many types of sensors used across environments and within devices. The categories also overlap; for example, a level sensor that tracks a material's level might also be considered an optical or pressure sensor. There are also plenty of other types of sensors, such as those that detect load, strain, color, sound and a variety of other conditions. Sensors have become so commonplace, in fact, that their use often goes unnoticed.
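The overlap between level and pressure sensing noted above can be made concrete. A pressure sensor at the bottom of a tank can infer liquid level from hydrostatic pressure, h = P / (ρg), where P is the gauge pressure, ρ the liquid's density and g the gravitational acceleration. This Python sketch assumes water (1,000 kg/m³) by default; the function name is hypothetical.

```python
STANDARD_GRAVITY_M_S2 = 9.80665


def level_from_pressure_m(gauge_pressure_pa: float,
                          density_kg_m3: float = 1000.0) -> float:
    """Liquid column height implied by the gauge pressure at the bottom of a tank.

    ASSUMPTION: density defaults to water; other liquids need their own value.
    """
    return gauge_pressure_pa / (density_kg_m3 * STANDARD_GRAVITY_M_S2)


print(level_from_pressure_m(9806.65))  # water
print(level_from_pressure_m(9806.65, density_kg_m3=740.0))  # a lighter liquid
```

The same pressure reading implies a taller column for a less dense liquid, so a pressure-based level sensor has to know what it is measuring.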

See also: smart sensor, sensor data, spatial sensing, proximity sensing, CMOS sensor, sensor analytics, pressure sensor, collision sensor, wireless sensor network, industrial internet of things, sensor hub.